Introduction

In 2026, data leadership is often defined by the velocity at which information can be converted into autonomous action, whereas previously it was all about the volume of information the organisation stored. And yet for data practitioners and digital transformation leaders, the central challenge remains the same. How do we grant teams the autonomy to innovate without losing the governance required for integrity?

This article explores the strategic transition from monolithic, centralised fortresses to the agile, distributed ecosystems of the data mesh. We will examine why the traditional single source of truth is evolving into a single point of discovery, and how treating data as a product is the essential prerequisite for scaling internal infrastructure.

Centralised vs. Decentralised

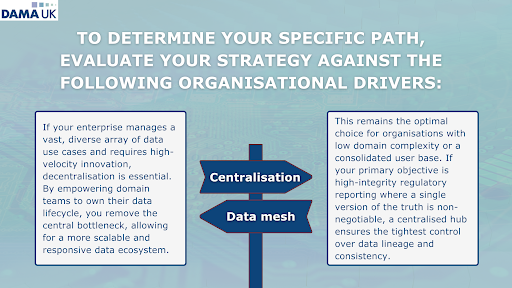

To provide a comprehensive view of how data works in the modern setting, we must examine the structural shift from monolithic repositories to distributed ecosystems. As organisations mature, the choice between centralised and decentralised architectures becomes less about technical preference and more about aligning data delivery with business velocity.

The Centralised Model: The Fortress of Consistency

The traditional centralised architecture, often manifested as a unified Data Warehouse or Lake, operates on the principle of consolidation. By pulling all enterprise information into a single location managed by a specialist team, organisations achieve a high degree of structural consistency. This model remains the gold standard for single source of truth requirements, such as audited financial reporting or regulatory compliance, where a single discrepancy can have significant legal ramifications.

However, this fortress approach often introduces a delivery bottleneck. Because a central team must oversee every ingestion pipeline and schema change, the time between a business question arising and the data being available (latency) increases as the organisation grows. While it offers unparalleled control, it often struggles to keep pace with the diverse, localised needs of modern business units.

The Data Mesh: Empowerment through Domain Ownership

In contrast, the Data Mesh represents a paradigm shift toward decentralisation. Rather than treating data as a byproduct to be collected, it treats data as a product to be managed by those who understand it best: the functional domains (e.g., Marketing, Logistics, or HR). In this model, the responsibility for data quality and availability shifts from a central IT office to the domain experts themselves.

This decentralisation is optimised for rapid innovation. By removing the central intermediary, domain teams can iterate on their data products in real-time, scaling complex ecosystems without waiting for global architectural approval. The Mesh succeeds when it is supported by a self-service platform that provides the necessary infrastructure, such as storage and identity management, allowing creators to focus on the value of the data itself rather than the underlying plumbing.

Architecting for the Agentic Era

The 2026 conversation around architecture has evolved beyond human consumption of data toward the Agentic Era. According to McKinsey & Company, modern enterprise architecture is now being redesigned to support autonomous AI agents that do not just visualise data but act upon it.

For these agents to function effectively, data can no longer be trapped in static, opaque silos. It must be modular, highly discoverable, and accompanied by rich metadata that an AI can interpret without human intervention. This shift necessitates a Data Fabric or Mesh approach where data is exposed via standardised interfaces, ensuring that as AI agents become more prevalent, they can traverse the enterprise landscape to find and utilise the exact information required for autonomous decision-making. In this new era, the single source of truth appears to be getting replaced by a single point of discovery, where the focus is on how easily an autonomous system can find and trust a decentralised data product.

Treating Data as a Product

Transitioning from centralised to decentralised requires a fundamental shift in mindset. At the heart of this evolution is the transition from viewing data as a passive resource to treating it as a high-quality, consumable product.

Traditionally, organisations have treated data as a natural resource, something to be extracted and stored (in bulk!). However, this often results in data swamps where value is buried under layers of inconsistency. Drawing from sources such as Snowplow’s philosophy, a true data product must meet four critical criteria to be functional in a modern ecosystem:

- Discoverable: It must be easily found via a central catalogue or search interface.

- Addressable: It must have a unique, permanent location where consumers can access it programmatically.

- Trustworthy: It must include built-in quality guarantees and Service Level Objectives (SLOs).

- Self-Describing: The data must carry its own context, allowing a user (or an AI agent) to understand its meaning without needing to call the original developer.

Shifting Focus to the Consumer

The primary advantage of Product Thinking is that it forces data creators to move away from the collect everything mentality and toward creating value for the consumer. In a professional data education context, we define this as a shift in accountability. When data is a product, the definition of ‘done’ is no longer when the data is stored in a cloud bucket but that it is only done when the end-user (whether a data scientist or a generative AI model) can successfully derive an insight from it. This consumer-centric approach reduces the friction of data discovery and drastically accelerates the time-to-insight for the entire enterprise.

Metadata and schemas

To ensure that decentralisation doesn't lead to disorganisation, these individual data products must remain interoperable. This is achieved through the rigorous application of metadata and schemas. Doing so means that organisations can ensure that even if different departments (domains) are building their own products, they are all using a common language.

What's more, to support these data products, the organisational infrastructure can benefit from evolving into a platform as a product. This model shifts the central data team’s role from manual data processing to engineering the self-service tools that empower domain teams. By providing standardised CI/CD pipelines, automated access management, and integrated data cataloguing, the platform significantly reduces the cognitive load on domain experts, allowing them to focus on business logic rather than technical plumbing. This internal ecosystem acts as a data fabric, ensuring that decentralised nodes remain interoperable and governed by design.

Creating a hybrid reality

For most organisations, the ideal state is not a pure architectural extreme, but a governed mesh. This means a hybrid model that balances local agility with global standards.

Transitioning to a mesh architecture is as much a cultural challenge as a technical one. Many organisations fail in this transition because they underestimate the cognitive load placed on domain teams. Without a platform as a product to provide the necessary tooling, teams often revert to legacy silos. Furthermore, success requires a high baseline of data literacy. Without it, decentralised ownership can quickly degrade into fragmented, low-quality data outputs.

Future-Proofing

The ultimate goal of this modern framework is to prepare the enterprise for the Agentic Era. In 2026, data must be structured not just for human dashboards, but for autonomous AI agents. These LLM-driven agents require data products that are modular, self-describing, and highly discoverable to navigate the mesh and execute complex tasks without manual oversight. By building a governed mesh today, you are essentially creating the digital map that your future AI workforce will use to drive enterprise-wide value.

Sources:

https://snowplow.io/lp/success-starts-with-treating-data-as-a-product

https://www.informatica.com/resources/articles/data-mesh-governance-explained.html

.png)